There was lots of talk at Tech-Ed last week about concurrency and parallel programming. It’s becoming a real issue because we’re in the middle of a shift in the way Moore’s Law works for us. Up until around 2002, we were getting a “free lunch”: processors kept getting faster and more powerful, and our software would just run faster without us having to do anything. Recently, however, we have been approaching physical limits that slow this effect down. Nowadays we increase processing power by adding more and more “cores”. To take advantage of these, our programs must divide their workload into tasks that can be executed in parallel rather than sequentially.

We’ve got a big problem on our hands, though, because writing concurrent programs is not easy, even for experts, and we, as mere software developers, are not trained to do it well. The closest that a lot of developers get is managing the synchronisation of access to shared data by multiple threads in a web application.

This is a real screenshot of Windows Task Manager on a machine with 64 cores and 2TB of memory. I wouldn’t be surprised if we have entry-level 8-core machines next year. If we don’t do anything to take advantage of these extra cores, we may as well just have a single-core machine.

There have been thread synchronisation and asynchronous primitives in the .Net framework since the beginning, but using these correctly is notoriously difficult. Having to work at such a low level means that you typically have to write a huge amount of code to get it working properly. That code gets in the way of, and detracts from, what the program is really trying to do.

Not anymore! There is a whole range of new technologies upon us that are designed to help us write applications that can take advantage of multi-core and many-core platforms. These include new parallel extensions for both managed and unmanaged code (although I won’t go into the unmanaged extensions), and a new language (F#) for the .Net Framework.

Parallel FX (PFX)

The first of these technologies is collectively known as Parallel FX (PFX) and is now on its 3rd CTP drop. It’s deeply integrated into the .Net Framework 4.0 which is scheduled for release with Visual Studio 2010, and consists of Parallel LINQ (PLINQ) and the Task Parallel Library (TPL). The latest CTP (including VS2010 and .Net 4.0) was released on 31st October and a VPC image of it can be downloaded here (although it’s 7GB so you may want to check out Brian Keller’s post for a suggestion on how to ease the pain!).

This is all part of an initiative by Microsoft to allow developers to concentrate more on the “what” of software development than on the “how”. It means that a developer should be able to declaratively specify what needs to be done and not worry too much about how that actually happens. LINQ was a great step in that direction and PLINQ takes it further by letting the framework know that a query can be run in parallel. Before we talk about PLINQ, though, let’s look at the TPL.

Task Parallel Library (TPL)

The basic unit of parallel execution in the TPL is the Task, which can be constructed around a lambda. The static Parallel.For() and Parallel.ForEach() methods create a Task for each member of the source IEnumerable and distribute execution of these across the machine’s available processors using User Mode Scheduling. Similarly, Parallel.Invoke() does the same for each of its lambda parameters.
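To make that a little more concrete, here is a minimal sketch of Parallel.For() and Parallel.Invoke(). It assumes the API shape that shipped in .NET 4 (which may differ in detail from the CTP), and the class and method names are made up purely for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical names, for illustration only.
public static class ParallelLoopsSketch
{
    // Sums the squares of 0..count-1 with the iterations spread across cores.
    public static long SumOfSquares(int count)
    {
        long total = 0;
        Parallel.For(0, count, i =>
        {
            // Iterations may run concurrently, so the shared total
            // is updated atomically rather than with a plain +=.
            Interlocked.Add(ref total, (long)i * i);
        });
        // Parallel.For only returns once every iteration has finished.
        return total;
    }

    public static void Main()
    {
        Console.WriteLine(SumOfSquares(1000)); // prints 332833500

        // Each lambda is an independent piece of work; Invoke returns
        // when all of them have completed. Their order is not guaranteed.
        Parallel.Invoke(
            () => Console.WriteLine("first"),
            () => Console.WriteLine("second"));
    }
}
```

Note that the loop body still has to be thread-safe: the library takes care of scheduling, not of protecting shared state.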

A new TaskManager class allows full control over how the Tasks are constructed, managed and scheduled for execution, with multiple instances sharing a single scheduler in an attempt to throttle over-subscription.

The TPL helps developers write multi-threaded applications by providing assistance at every level. For instance, LazyInit<T> helps you to manage the lazy initialisation of (potentially shared) data within your application.
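As a rough illustration of lazy initialisation of shared data, the sketch below uses Lazy<T>, which is the name this type ended up with in the released .NET 4 (the CTP’s LazyInit<T> may behave differently in detail). The class, field and method names are hypothetical.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical names, for illustration only.
public static class LazySketch
{
    private static int initCount = 0;

    // With ExecutionAndPublication the factory runs at most once,
    // even if several threads ask for Value at the same moment.
    private static readonly Lazy<string> Config = new Lazy<string>(
        () =>
        {
            Interlocked.Increment(ref initCount);
            return "loaded";
        },
        LazyThreadSafetyMode.ExecutionAndPublication);

    public static int InitCount { get { return initCount; } }

    public static string Read() { return Config.Value; }

    public static void Main()
    {
        // Many concurrent readers, one initialisation.
        Parallel.For(0, 100, i => { Read(); });
        Console.WriteLine(InitCount); // prints 1
    }
}
```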

Additionally, a bunch of thread-safe collections (such as BlockingCollection<T>, ConcurrentDictionary<TKey, TValue>, ConcurrentQueue<T> and ConcurrentStack<T>) help with various parallelisation scenarios including multiple producer and consumer (parallel pipeline) paradigms.
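A multiple-producer, single-consumer pipeline might look something like the sketch below. It assumes the collection APIs as they shipped in .NET 4; the class and method names, the capacity of 16 and the item counts are all arbitrary choices for the example.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq;
using System.Threading.Tasks;

// Hypothetical names, for illustration only.
public static class PipelineSketch
{
    public static int ProduceAndConsume(int producers, int itemsPerProducer)
    {
        // Bounded, so producers block when the consumer falls behind.
        var queue = new BlockingCollection<int>(16);
        var consumed = new ConcurrentQueue<int>();

        // The consumer gets a dedicated thread because it blocks while waiting.
        var consumer = Task.Factory.StartNew(() =>
        {
            // Ends once CompleteAdding() has been called and the queue is empty.
            foreach (var item in queue.GetConsumingEnumerable())
                consumed.Enqueue(item);
        }, TaskCreationOptions.LongRunning);

        var producerTasks = Enumerable.Range(0, producers)
            .Select(p => Task.Factory.StartNew(() =>
            {
                for (int i = 0; i < itemsPerProducer; i++)
                    queue.Add(i);
            }))
            .ToArray();

        Task.WaitAll(producerTasks);
        queue.CompleteAdding(); // tell the consumer no more items are coming
        consumer.Wait();
        return consumed.Count;
    }

    public static void Main()
    {
        Console.WriteLine(ProduceAndConsume(3, 100)); // prints 300
    }
}
```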

Finally, there’s an amazing debugger experience with all of this. You can view (in a fancy WPF tool window) all of your Tasks and the route they take through your application. Moving around Tasks is no longer a game of Thread hide-and-seek!

Parallel LINQ (PLINQ)

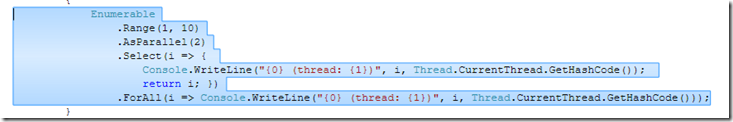

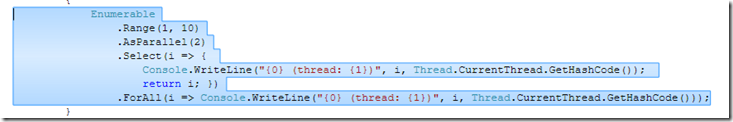

Setting up a query for parallel execution is easy – you just wrap the IEnumerable<T> in an IParallelEnumerable<T> by calling the AsParallel() extension method.

In the latest CTP the overloads of AsParallel() have changed slightly making it even simpler to use. In the example above I’ve specified a degree of parallelism indicating that I want the query to execute over 2 processors, but you can leave that up to the underlying implementation by not specifying any parameters.

The ForAll() extension method, shown above, allows you to specify a (thread-safe) lambda that is also executed in parallel – allowing for pipelined parallelism in both the running of the query and the processing of the results.

Running the example above produces the output below. Although it’s running on a single proc VPC, you can still see that it’s using 2 threads (hash codes 1 & 3). What’s interesting is that each item is both selected and enumerated on the same thread – this is really going to help performance by reducing the amount of cross thread traffic.
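For readers who can’t see the screenshots, here is a rough equivalent of that kind of query. It is not the original example: it is a sketch using the API names from the released .NET 4, where the degree of parallelism is set with WithDegreeOfParallelism() rather than through an AsParallel() overload. The class and method names are invented for illustration.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq;
using System.Threading;

// Hypothetical names, for illustration only.
public static class PlinqSketch
{
    public static int[] SquaresOfEvens(int count)
    {
        var results = new ConcurrentBag<int>();

        Enumerable.Range(0, count)
            .AsParallel()
            .WithDegreeOfParallelism(2)     // ask for at most 2 concurrent workers
            .Where(n => n % 2 == 0)
            .Select(n => n * n)
            .ForAll(sq => results.Add(sq)); // runs in parallel, so it must be thread-safe

        // ForAll gives no ordering guarantee, so sort before returning.
        return results.OrderBy(x => x).ToArray();
    }

    public static void Main()
    {
        // Printing the thread id shows which thread handled each item.
        Enumerable.Range(0, 10)
            .AsParallel()
            .WithDegreeOfParallelism(2)
            .ForAll(n => Console.WriteLine(
                "{0} on thread {1}", n, Thread.CurrentThread.ManagedThreadId));

        Console.WriteLine(string.Join(",", SquaresOfEvens(6)));
    }
}
```

The thread ids and the order of the lines will vary from run to run and from machine to machine.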

Exception handling in the latest CTP is also slightly different from earlier releases. Tasks have a Status property which indicates how the task completed (or if it was cancelled) and potentially holds an exception that may have been thrown from the Task’s lambda. Any unhandled and unobserved exceptions will get marshalled off the thread before garbage collection and are allowed to propagate up the stack.
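The sketch below shows one way of observing a Task’s exception. It assumes the behaviour of the released .NET 4, where waiting on a faulted Task rethrows its exception wrapped in an AggregateException; the CTP may differ in detail, and the names are hypothetical.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical names, for illustration only.
public static class TaskExceptionSketch
{
    public static string RunFaultingTask()
    {
        Action body = () => { throw new InvalidOperationException("boom"); };
        var task = Task.Factory.StartNew(body);

        try
        {
            task.Wait();
        }
        catch (AggregateException ex)
        {
            // The original exception is carried as an inner exception,
            // and the Task's Status records how it finished.
            return task.Status + ": " + ex.InnerException.Message;
        }
        return task.Status.ToString();
    }

    public static void Main()
    {
        Console.WriteLine(RunFaultingTask()); // prints "Faulted: boom"
    }
}
```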

Daniel Moth did an excellent presentation on PFX at Tech-Ed which you can watch here.

F#

So what about F# then? Well, it’s a multi-paradigm language that has roots in ML (it’s OCaml compatible if you omit the #light directive). So it’s very definitely a first-class functional language, but it also has type semantics that allow it to integrate fully with the .Net Framework. If C# is imperative with some functional thrown in, then F# is functional with some imperative thrown in (both languages can exhibit object orientation).

Data and functions are equivalent and can be passed around by value. This, coupled with immutability by default, means that side-effect free functions can be easily composed together in both synchronous and asynchronous fashion and executed in parallel with impunity. All-in-all this makes it very powerful for addressing some of the modern concurrent programming problems. It’s not for every problem that’s for sure – C# is often a better choice – but the fact that it can be called seamlessly from any .Net language makes it easy to write an F# library for some specialised tasks.

Luke Hoban and Bart de Smet did some great talks at Tech-Ed on parallel programming using F#, and it blew me away how appropriate F# is for problems like these. I’m really getting into the whole F# thing so I’ll save it for another post next week.

Having done Biology at University rather than Computer Science, I regret having spent most of my career not really understanding why functional programming is so important. I knew it was the oldest paradigm around, but assumed that it was just for mathematicians and scientists – business software needed something far better: imperative programming and object-orientation. How naive was I?

We’ll leave a discussion about why functional programming is so good for a future post. In the meantime, I can recommend both these books. “Foundations” is more readable, and “Expert” more thorough (neither assumes any previous F# knowledge).
